- A ReLU layer performs a threshold operation on each element of the input, where any value less than zero is set to zero.
- A leaky ReLU layer performs a threshold operation, where any input value less than zero is multiplied by a fixed scalar.
- A PReLU layer performs a threshold operation, where, for each channel, any input value less than zero is multiplied by a scalar learned at training time.
- A clipped ReLU layer performs a threshold operation, where any input value less than zero is set to zero and any value above the clipping ceiling is set to that ceiling.
- An ELU activation layer performs the identity operation on positive inputs and an exponential nonlinearity on negative inputs.
- A Gaussian error linear unit (GELU) layer weights the input by its probability under a Gaussian distribution.
- A hyperbolic tangent (tanh) activation layer applies the tanh function to the layer inputs.
- A swish activation layer applies the swish function to the layer inputs.
- softplusLayer (Reinforcement Learning Toolbox): A softplus layer applies the softplus activation function $Y = \log(1 + e^X)$, which ensures that the output is always positive. This activation function is a smooth, continuous version of reluLayer. You can incorporate this layer into the deep neural networks you define for actors in reinforcement learning agents. This layer is useful for creating continuous Gaussian policy deep neural networks, for which the standard deviation output must be positive.
- A function layer applies a specified function to the layer input.
- A batch normalization layer normalizes a mini-batch of data across all observations for each channel independently.
- wordEmbeddingLayer (Text Analytics Toolbox): A word embedding layer maps word indices to vectors.
- A peephole LSTM layer is a variant of an LSTM layer, where the gate calculations use the layer cell state.
- A flatten layer collapses the spatial dimensions of the input into the channel dimension.
- A self-attention layer computes single-head or multihead self-attention of its input.
- A 1-D convolutional layer applies sliding convolutional filters to 1-D input.
- A transposed 1-D convolution layer upsamples one-dimensional feature maps.
- A 1-D max pooling layer performs downsampling by dividing the input into 1-D pooling regions, then computing the maximum of each region.
- A 1-D average pooling layer performs downsampling by dividing the input into 1-D pooling regions, then computing the average of each region.
- A 1-D global max pooling layer performs downsampling by outputting the maximum of the time or spatial dimensions of the input.
- A sequence folding layer converts a batch of image sequences to a batch of images. Use a sequence folding layer to perform convolution operations on time steps of image sequences independently.
- A sequence unfolding layer restores the sequence structure of the input data after sequence folding.
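The elementwise activation layers described above can be sketched as plain scalar Python functions. This is a minimal illustration, not the toolbox implementation: the function names are hypothetical, and the leaky-ReLU scale of 0.01, the ELU alpha of 1, and the clipping ceiling of 6 are assumed defaults chosen here for the example. Swish is shown with its scaling factor fixed at 1.

```python
import math

def relu(x: float) -> float:
    # Any value less than zero is set to zero.
    return max(0.0, x)

def leaky_relu(x: float, scale: float = 0.01) -> float:
    # Negative inputs are multiplied by a fixed scalar (0.01 is an assumed default).
    return x if x >= 0 else scale * x

def prelu(x: float, alpha: float) -> float:
    # Same shape as leaky ReLU, but in a PReLU layer alpha is learned during
    # training (one value per channel); here it is simply passed in.
    return x if x >= 0 else alpha * x

def clipped_relu(x: float, ceiling: float = 6.0) -> float:
    # Negative inputs become zero; inputs above the ceiling become the ceiling.
    return min(max(0.0, x), ceiling)

def elu(x: float, alpha: float = 1.0) -> float:
    # Identity on positive inputs, alpha * (e^x - 1) on negative inputs.
    return x if x > 0 else alpha * (math.exp(x) - 1.0)

def softplus(x: float) -> float:
    # log(1 + e^x), rewritten as max(x, 0) + log(1 + e^-|x|) so that
    # math.exp never overflows; mathematically the result is always positive.
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def gelu(x: float) -> float:
    # Weights x by the standard normal CDF Phi(x), computed via erf.
    return x * 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def sigmoid(x: float) -> float:
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def swish(x: float) -> float:
    # x * sigmoid(x), i.e. the swish function with its scaling factor fixed at 1.
    return x * sigmoid(x)

# tanh needs no helper: math.tanh applies it directly.
for f in (relu, leaky_relu, clipped_relu, elu, softplus, gelu, swish, math.tanh):
    print(f.__name__, [round(f(v), 4) for v in (-2.0, 0.0, 2.0, 8.0)])
```

For example, `relu(-2.0)` returns `0.0` and `clipped_relu(10.0)` returns `6.0`. Real layers apply these functions elementwise to whole tensors; the scalar form just makes each threshold rule easy to read.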

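The 1-D pooling layers described above reduce each pooling region to a single value. A minimal sketch, assuming a single channel stored as a Python list, non-overlapping windows (stride equal to the pool size), no padding, and that an incomplete trailing window is dropped; the function names and `pool_size` are hypothetical. Real layers typically also handle batches, multiple channels, configurable strides, and padding.

```python
from typing import Callable, List, Sequence

def pool1d(x: Sequence[float], pool_size: int,
           reduce: Callable[[Sequence[float]], float]) -> List[float]:
    # Non-overlapping windows (stride == pool_size), no padding; an incomplete
    # trailing window is dropped. Each window is reduced to a single value.
    if pool_size <= 0:
        raise ValueError("pool_size must be positive")
    n_windows = len(x) // pool_size
    return [reduce(x[i * pool_size:(i + 1) * pool_size]) for i in range(n_windows)]

def max_pool1d(x: Sequence[float], pool_size: int) -> List[float]:
    # Maximum of each pooling region.
    return pool1d(x, pool_size, max)

def avg_pool1d(x: Sequence[float], pool_size: int) -> List[float]:
    # Average of each pooling region.
    return pool1d(x, pool_size, lambda w: sum(w) / len(w))

def global_max_pool1d(x: Sequence[float]) -> float:
    # Global pooling reduces the whole time (or spatial) dimension to one value.
    return max(x)

signal = [1.0, 3.0, 2.0, 8.0, 5.0, 4.0]
print(max_pool1d(signal, 2))      # [3.0, 8.0, 5.0]
print(avg_pool1d(signal, 2))      # [2.0, 5.0, 4.5]
print(global_max_pool1d(signal))  # 8.0
```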
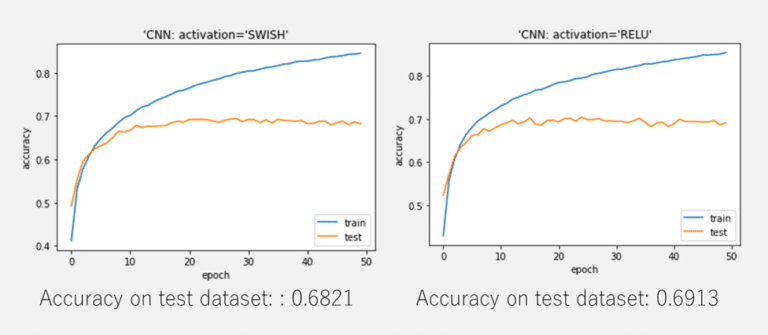

- A sequence input layer inputs sequence data to a neural network.
- An LSTM layer is an RNN layer that learns long-term dependencies between time steps in time series and sequence data.
- An LSTM projected layer is an RNN layer that learns long-term dependencies between time steps in time series and sequence data using projected learnable weights.
- A GRU layer is an RNN layer that learns dependencies between time steps in time series and sequence data.
- A bidirectional LSTM (BiLSTM) layer is an RNN layer that learns bidirectional long-term dependencies between time steps of time series or sequence data. These dependencies can be useful when you want the RNN to learn from the complete time series at each time step.

Abstract of the paper "Searching for Activation Functions" by Prajit Ramachandran and two other authors:

The choice of activation functions in deep networks has a significant effect on the training dynamics and task performance. Currently, the most successful and widely used activation function is the Rectified Linear Unit (ReLU). Although various hand-designed alternatives to ReLU have been proposed, none have managed to replace it due to inconsistent gains. We propose to leverage automatic search techniques to discover new activation functions. Using a combination of exhaustive and reinforcement learning-based search, we discover multiple novel activation functions. We verify the effectiveness of the searches by conducting an empirical evaluation with the best discovered activation function. Our experiments show that the best discovered activation function, $f(x) = x \cdot \text{sigmoid}(\beta x)$, which we name Swish, tends to work better than ReLU on deeper models across a number of challenging datasets. For example, simply replacing ReLUs with Swish units improves top-1 classification accuracy on ImageNet by 0.9% for Mobile NASNet-A and 0.6% for [...]. The simplicity of Swish and its similarity to ReLU make it easy for practitioners to replace ReLUs with Swish units in any neural network.
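The Swish function from the abstract, $f(x) = x \cdot \text{sigmoid}(\beta x)$, is easy to compare against ReLU numerically. The following is a minimal scalar sketch; exposing `beta` as a plain keyword argument defaulting to 1 is an assumption made here for illustration, and the function names are hypothetical.

```python
import math

def sigmoid(z: float) -> float:
    # Numerically stable logistic sigmoid (avoids overflow in math.exp).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def swish(x: float, beta: float = 1.0) -> float:
    # f(x) = x * sigmoid(beta * x)
    return x * sigmoid(beta * x)

def relu(x: float) -> float:
    return max(0.0, x)

print(" x      relu   swish(b=1)  swish(b=10)")
for x in (-3.0, -1.0, 0.0, 1.0, 3.0):
    print(f"{x:+.1f}  {relu(x):6.3f}  {swish(x):9.3f}  {swish(x, 10.0):10.3f}")
```

Setting `beta = 0` gives $\text{sigmoid}(0) = 1/2$, so $f(x) = x/2$. As `beta` grows, $\text{sigmoid}(\beta x)$ approaches a step function and $f$ approaches ReLU. Unlike ReLU, Swish is non-monotonic and returns small negative values for negative inputs; for example, with $\beta = 1$, $f(-1) = -1/(1+e) \approx -0.269$.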
